Qualcomm AI200 - Rack-Scale Inference ASIC

Qualcomm AI200 specs and analysis - a Hexagon-based inference accelerator with 768GB LPDDR per card, rack-scale design, and a focus on inference TCO.

Qualcomm AI200 specs and analysis - a Hexagon-based inference accelerator with 768GB LPDDR per card, rack-scale design, and a focus on inference TCO.

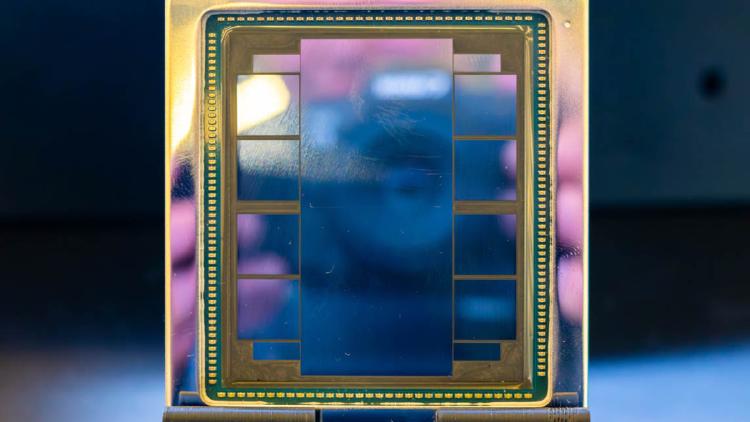

Complete specs and analysis of SambaNova's SN50 RDU - a TSMC 3nm dataflow chip with 3.2 PFLOPS FP8, three-tier memory, and claimed 5x speed over NVIDIA B200.

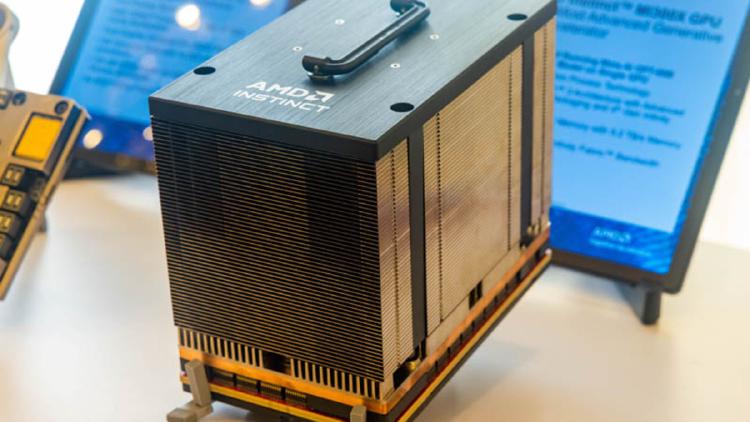

AMD Instinct MI300X specs, benchmarks, and real-world performance data. 192GB HBM3, 5,300 GB/s bandwidth, 2,610 TFLOPS FP8 on CDNA 3 chiplet architecture.

AMD Instinct MI350X specs and performance estimates. 288GB HBM3e, ~6,000 GB/s bandwidth, ~3,600 TFLOPS FP8 on CDNA 4 architecture at TSMC 3nm.

Full specs and benchmarks for the Apple M4 Max SoC - up to 128GB unified memory at 546 GB/s, 3nm process, and why it has become the quiet favorite for running 70B+ models locally.

AWS Trainium2 is Amazon's second-generation custom AI training chip, powering EC2 Trn2 instances with 96GB HBM2e per chip and tight integration with the AWS Neuron SDK and SageMaker ecosystem.