Cambricon MLU590 - China's Inference Accelerator

Full specs and analysis of the Cambricon MLU590 - 192GB HBM2e, ~2,400 GB/s bandwidth, TSMC 7nm, and what it means for AI inference outside the NVIDIA ecosystem.

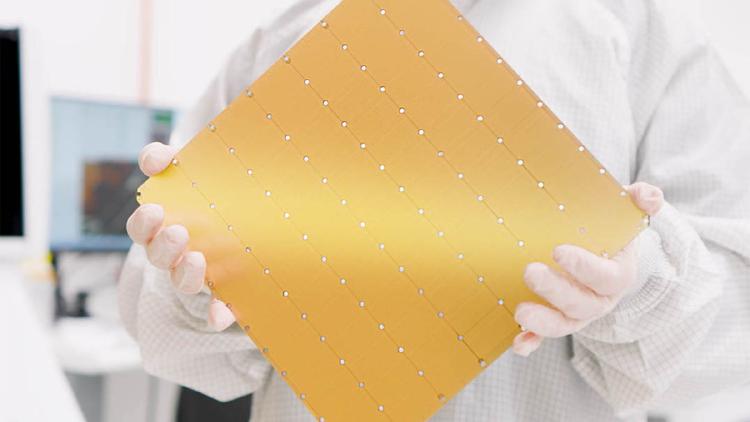

The Cerebras Wafer-Scale Engine 3 is the largest chip ever built - an entire TSMC 5nm wafer with 900,000 AI cores, 44GB of on-chip SRAM, and 21 PB/s of memory bandwidth powering the CS-3 AI supercomputer.

Google Cloud TPU v6e Trillium specs, benchmarks, and pricing. 32GB HBM per chip, ~1,600 GB/s bandwidth, optimized for Transformer training and inference at cloud scale.

Google TPU v7 Ironwood specs, architecture, and performance estimates. Google's next-gen inference-optimized TPU with 192GB of HBM per chip, announced at Cloud Next 2025.

Groq's Language Processing Unit (LPU) is a purpose-built inference ASIC that trades HBM for 230MB of on-chip SRAM, delivering deterministic latency and record-breaking tokens-per-second for LLM serving.

Huawei Ascend 910B specs, benchmarks, and real-world performance. 64GB HBM2e, ~1,200 GB/s bandwidth, ~600 TFLOPS FP16 - a chip reportedly used to run DeepSeek models.