OBLITERATUS Strips AI Safety From Open Models in Minutes

A new open-source toolkit called OBLITERATUS can surgically remove refusal mechanisms from 116 open-weight LLMs using abliteration - no fine-tuning, no training data, just geometry.

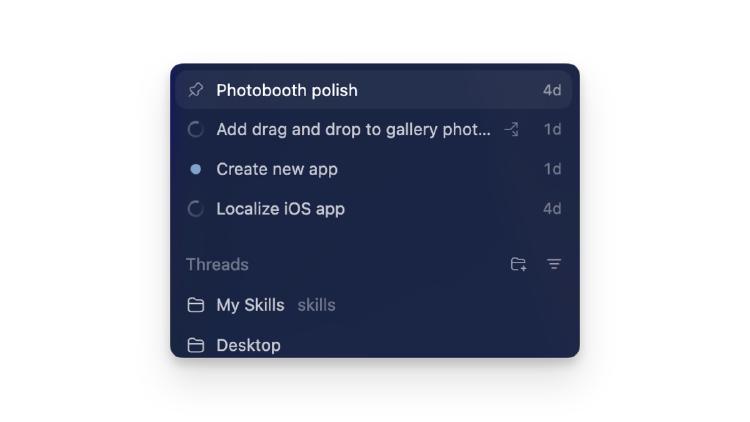

OpenAI's Codex desktop app hits the Microsoft Store with native Windows sandboxing, PowerShell agents, WinUI skills, and multi-agent parallel execution - no WSL required.

Arc Institute and NVIDIA release Evo 2, a 40B-parameter open-source AI trained on 9.3 trillion nucleotides from every domain of life, with full weights, code, and training data.

Researchers from ETH Zurich and Anthropic show that LLM agents can strip pseudonymity from forum posts at scale for as little as $1.41 per target - matching what human investigators could do in hours.

Andrew Ng says AGI is decades away and the real AI bubble risk is in the training layer - not inference. We examine both claims against the data.

Apple's cheapest Mac ever packs the A18 Pro iPhone chip with a 16-core Neural Engine - but its 60 GB/s memory bandwidth puts a hard ceiling on what local models you can actually run.
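
To see why memory bandwidth acts as a ceiling, here is a rough back-of-the-envelope sketch. It assumes a dense model whose weights are all read from memory once per generated token, and it ignores KV-cache traffic, compute limits, and overhead, so real throughput would be lower. The model sizes and quantization levels are illustrative assumptions, not figures from the article; only the 60 GB/s number comes from the text above.

```python
def max_decode_tokens_per_second(bandwidth_gb_s, params_billions, bits_per_weight):
    """Rough upper bound on decode speed for a memory-bound dense model.

    Assumes every weight is read exactly once per generated token and that
    nothing else (KV cache, activations) competes for bandwidth.
    """
    # Billions of parameters times bytes per parameter gives gigabytes of weights.
    model_gb = params_billions * bits_per_weight / 8
    return bandwidth_gb_s / model_gb


BANDWIDTH_GB_S = 60.0  # memory bandwidth quoted in the text

# Hypothetical model configurations: (parameters in billions, bits per weight).
EXAMPLE_MODELS = [(3, 4), (8, 4), (8, 16), (14, 4)]

for params_b, bits in EXAMPLE_MODELS:
    ceiling = max_decode_tokens_per_second(BANDWIDTH_GB_S, params_b, bits)
    print(f"{params_b}B params at {bits}-bit: at most ~{ceiling:.1f} tokens/s")
```

Under these assumptions, an 8B-parameter model quantized to 4 bits occupies about 4 GB, so 60 GB/s allows at most roughly 15 tokens per second, while the same model at 16 bits drops below 4 tokens per second.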