Meta MTIA 300

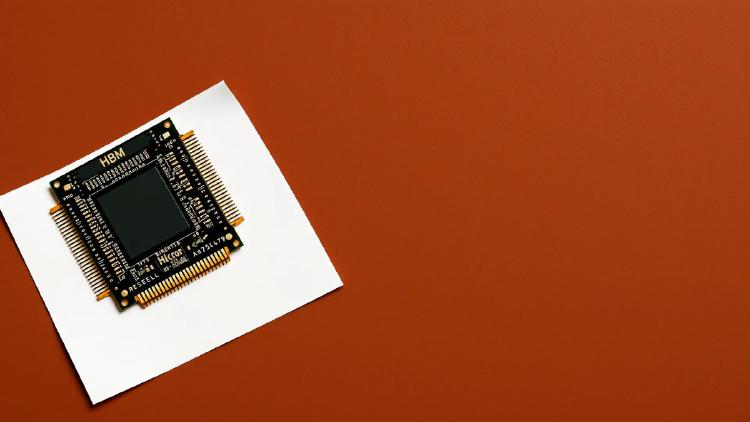

Meta's first mass-deployed RISC-V AI accelerator - 1.2 PFLOPS FP8, 216 GB HBM, powering Facebook and Instagram at scale.

Meta's first mass-deployed RISC-V AI accelerator - 1.2 PFLOPS FP8, 216 GB HBM, powering Facebook and Instagram at scale.

Full specs and critical analysis of the Etched Sohu - a transformer-specific ASIC claiming 500K+ tokens/sec on Llama 70B, built on TSMC 4nm with 144GB HBM3E. Bold claims, but no independent benchmarks yet.

Qualcomm AI200 specs and analysis - a Hexagon-based inference accelerator with 768GB LPDDR per card, rack-scale design, and a focus on inference TCO.

Complete specs and analysis of SambaNova's SN50 RDU - a TSMC 3nm dataflow chip with 3.2 PFLOPS FP8, three-tier memory, and claimed 5x speed over NVIDIA B200.

AWS Trainium2 is Amazon's second-generation custom AI training chip, powering EC2 Trn2 instances with 96GB HBM2e per chip and tight integration with the AWS Neuron SDK and SageMaker ecosystem.

Full specs and analysis of the Cambricon MLU590 - 192GB HBM2e, ~2,400 GB/s bandwidth, TSMC 7nm, and what it means for AI inference outside the NVIDIA ecosystem.