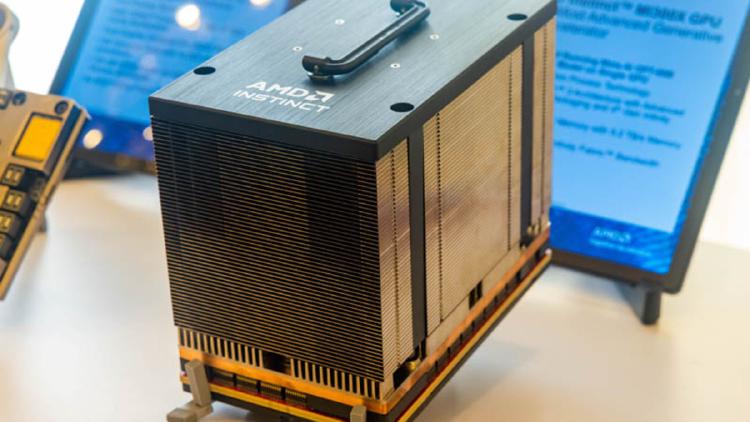

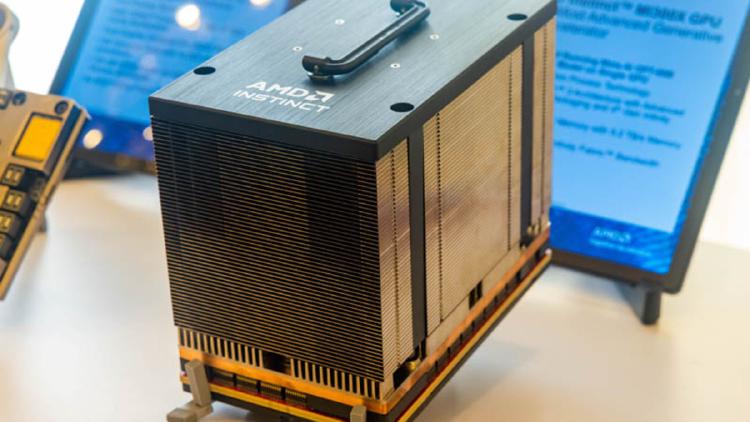

AMD Instinct MI300X

AMD Instinct MI300X specs, benchmarks, and real-world performance data. 192GB HBM3, 5,300 GB/s bandwidth, 2,610 TFLOPS FP8 on CDNA 3 chiplet architecture.

AMD Instinct MI350X specs and performance estimates. 288GB HBM3e, ~6,000 GB/s bandwidth, ~3,600 TFLOPS FP8 on CDNA 4 architecture at TSMC 3nm.

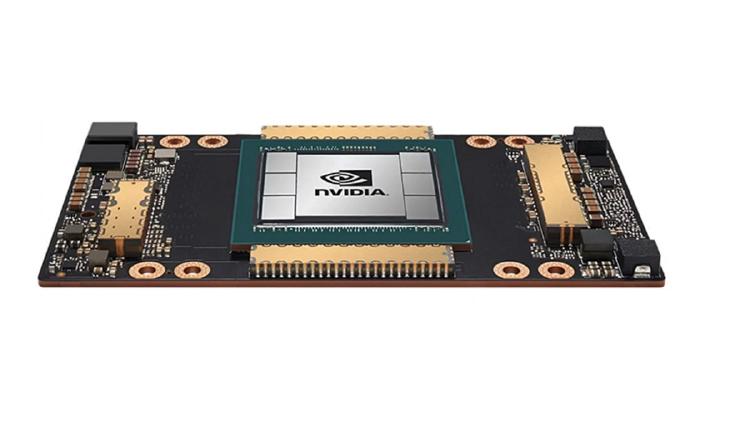

Complete specs, benchmarks, and analysis of the NVIDIA A100 80GB SXM - the Ampere-architecture GPU that remains the most widely deployed AI accelerator in the world.

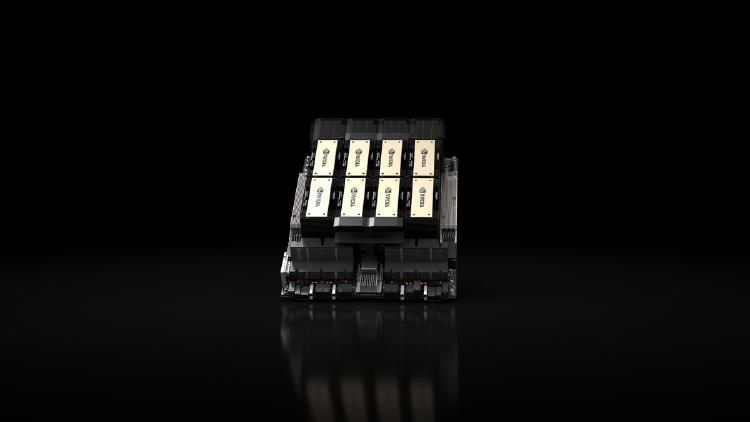

Complete specs, benchmarks, and analysis of the NVIDIA B200 - the Blackwell-architecture flagship GPU with 192GB HBM3e, 8 TB/s bandwidth, and up to 9,000 TFLOPS FP8.

Complete specs, benchmarks, and analysis of the NVIDIA H100 SXM - the Hopper-architecture GPU that defined the standard for AI training and inference performance.

Complete specs, benchmarks, and analysis of the NVIDIA H200 - the HBM3e-equipped Hopper GPU that delivers 76% more memory and 43% more bandwidth than the H100 for inference workloads.