DeepSeek V4 vs Kimi K2.5 - China's Trillion-Parameter MoE Duel

Two Chinese open-weight trillion-parameter MoE models with ~32B active parameters each - DeepSeek V4 bets on cost and context, Kimi K2.5 bets on Agent Swarm and verified benchmarks.

A pre-release comparison of DeepSeek V3.2 and V4 - examining the generational leap from a 671B-parameter text-only model to a trillion-parameter natively multimodal model with a 1M-token context window.

DeepSeek V4 is an unreleased trillion-parameter MoE model with ~32B active parameters, native multimodal capabilities, a 1M-token context window, and optimization for Huawei Ascend chips - expected in the first week of March 2026.

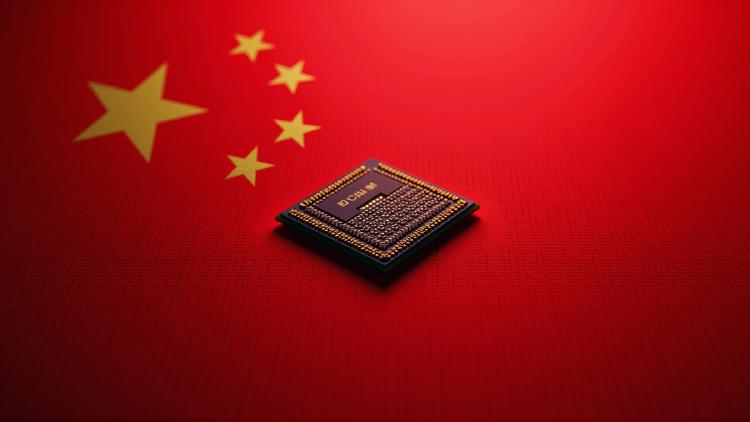

DeepSeek will release V4, a natively multimodal trillion-parameter model with a 1M-token context window, in the first week of March 2026 - optimized for Huawei Ascend chips, not Nvidia.

Huawei Ascend 910B specs, benchmarks, and real-world performance. 64GB HBM2e, ~1,200 GB/s bandwidth, ~600 TFLOPS FP16 - the chip that trained DeepSeek.

DeepSeek has denied Nvidia and AMD pre-release access to its upcoming V4 model while granting Huawei and domestic Chinese chipmakers a multi-week optimization window, signaling a strategic pivot toward building a parallel AI software ecosystem on Chinese silicon.