Nemotron 3 Nano 4B: NVIDIA Edge Model Runs on 8GB

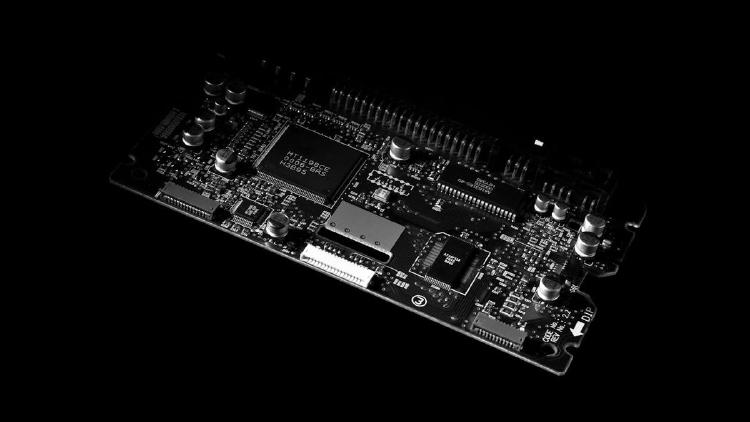

NVIDIA's Nemotron 3 Nano 4B packs a Mamba-dominant hybrid architecture, 262K token context, and 95.4% on MATH500 into a model that fits an 8GB Jetson Orin Nano.

NVIDIA's Nemotron 3 Nano 4B packs a Mamba-dominant hybrid architecture, 262K token context, and 95.4% on MATH500 into a model that fits an 8GB Jetson Orin Nano.

IBM's new 1B-parameter speech model claims the top spot on the Open ASR Leaderboard while running on consumer hardware, beating Whisper Large V3 by 25% on word error rate.

AMD expands its Ryzen AI Embedded P100 family with six new 8-to-12-core processors delivering 80 system TOPS, targeting industrial automation, robotics, and medical imaging.

Alibaba completes the Qwen 3.5 lineup with four small models - 0.8B, 2B, 4B, and 9B - all natively multimodal, 262K context, Apache 2.0. The 9B outperforms last-gen Qwen3-30B and beats GPT-5-Nano on vision benchmarks.

Qwen3.5-0.8B is the smallest natively multimodal model in the Qwen 3.5 family - 0.8B parameters handling text, images, and video with 262K context. MathVista 62.2, OCRBench 74.5. Apache 2.0.

Qwen3.5-2B is a 2B dense multimodal model with 262K context, thinking mode, and native vision including video understanding. OCRBench 84.5, VideoMME 75.6. Apache 2.0 licensed.