LLM API Pricing Comparison - March 2026

Side-by-side LLM API pricing for GPT-5.4, Claude Opus 4.6, Gemini 3.1 Pro, DeepSeek V3.2, Grok 4, and 30+ models normalized to cost per million tokens.
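Normalizing every model to cost per million tokens keeps the arithmetic for a real workload simple: multiply each rate by the tokens used and divide by one million. The sketch below assumes a model is priced with separate input and output rates. The names `ModelPrice`, `request_cost`, and `blended_per_m` are hypothetical helpers, and the prices in the example are placeholders, not figures from this comparison.

```python
from dataclasses import dataclass

TOKENS_PER_MILLION = 1_000_000


@dataclass(frozen=True)
class ModelPrice:
    """Per-million-token list prices in USD (hypothetical values supplied by the caller)."""
    name: str
    input_per_m: float
    output_per_m: float


def request_cost(price: ModelPrice, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one request: scale each per-million rate by the tokens actually used."""
    return (input_tokens * price.input_per_m
            + output_tokens * price.output_per_m) / TOKENS_PER_MILLION


def blended_per_m(price: ModelPrice, input_ratio: float) -> float:
    """Blended cost per million tokens when `input_ratio` of all tokens are input tokens."""
    if not 0.0 <= input_ratio <= 1.0:
        raise ValueError("input_ratio must be between 0 and 1")
    return input_ratio * price.input_per_m + (1 - input_ratio) * price.output_per_m


if __name__ == "__main__":
    # Placeholder prices for illustration only.
    example = ModelPrice("example-model", input_per_m=2.00, output_per_m=8.00)
    # (3,000 * 2.00 + 1,000 * 8.00) / 1,000,000 = 0.014 USD
    print(f"{request_cost(example, 3_000, 1_000):.4f}")
    # 0.75 * 2.00 + 0.25 * 8.00 = 3.50 USD per million tokens
    print(blended_per_m(example, 0.75))
```

A blended rate is useful because output tokens usually cost more than input tokens, so two models with the same input price can differ sharply on output-heavy workloads.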


NVIDIA will unveil a new inference processor built on Groq's LPU architecture at GTC 2026, with OpenAI as its first major customer allocating 3 GW of dedicated capacity.

Awesome Agents launches a dedicated Hardware section with detailed spec pages for 21 GPUs, TPUs, and AI accelerators - from datacenter flagships to home lab favorites.

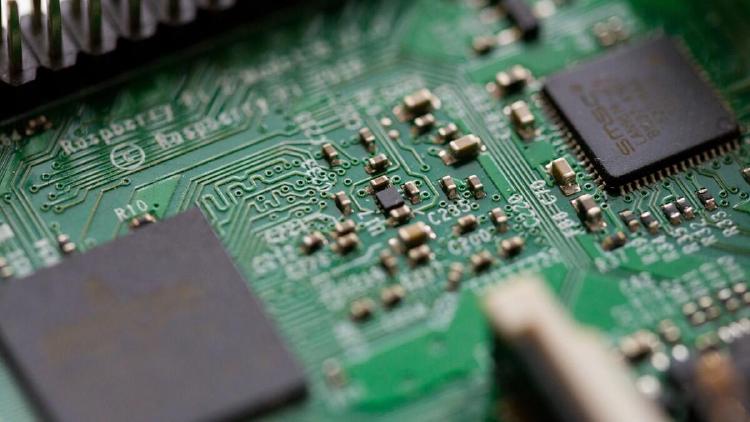

Groq's Language Processing Unit (LPU) is a purpose-built inference ASIC that trades HBM for 230MB of on-chip SRAM, delivering deterministic latency and record-breaking tokens-per-second for LLM serving.
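Holding weights in 230MB of SRAM instead of HBM means a large model must be sharded across many chips. The sketch below gives a rough lower bound on the chip count. It assumes decimal megabytes, counts model weights only (ignoring KV cache, activations, and replication), and uses hypothetical parameter counts and precisions, not Groq's actual deployment figures. The helper name `chips_to_hold_weights` is illustrative.

```python
SRAM_PER_CHIP_BYTES = 230 * 10**6  # 230 MB of on-chip SRAM per LPU (decimal MB assumed)


def chips_to_hold_weights(num_params: int, bits_per_param: int) -> int:
    """Lower bound on chips needed to keep all model weights in SRAM.

    Weights only: KV cache, activations, and redundancy would push the real number higher.
    Integer math avoids floating-point rounding in the ceiling division.
    """
    weight_bits = num_params * bits_per_param
    sram_bits = SRAM_PER_CHIP_BYTES * 8
    return -(-weight_bits // sram_bits)  # ceiling division


if __name__ == "__main__":
    # Hypothetical 70B-parameter model at two precisions.
    for bits in (16, 8):
        print(bits, "bits/param:", chips_to_hold_weights(70_000_000_000, bits), "chips")
```

At 8 bits per parameter, 70 GB of weights divided by 230 MB per chip gives just over 304, so at least 305 chips. This is why SRAM-only designs pair fast per-chip access with large multi-chip deployments.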

Groq's LPU chips deliver inference speeds well beyond typical GPU-based serving: 1,200+ tokens per second on Llama 4. We benchmark latency, throughput, model availability, and pricing against GPU-based competitors.
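Latency and throughput figures like these are usually derived from timestamps captured around a streamed response. The sketch below shows one common way to compute time to first token (TTFT) and decode-phase tokens per second. It assumes you already record the request-send, first-token, and last-token times (for example with `time.perf_counter()` around a streaming client). `StreamTiming` and the helper functions are hypothetical names, and this is not necessarily the methodology behind the numbers above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StreamTiming:
    """Wall-clock timestamps in seconds for one streamed completion (hypothetical structure)."""
    request_sent: float
    first_token: float
    last_token: float
    output_tokens: int


def time_to_first_token(t: StreamTiming) -> float:
    """Latency until the first output token arrives (includes queueing and prefill)."""
    return t.first_token - t.request_sent


def decode_tokens_per_second(t: StreamTiming) -> float:
    """Steady-state generation rate, measured between the first and last tokens."""
    decode_time = t.last_token - t.first_token
    if t.output_tokens < 2 or decode_time <= 0:
        raise ValueError("need at least two tokens over a positive interval")
    # The first token marks the start of the interval, so it is not counted.
    return (t.output_tokens - 1) / decode_time


def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to stream a response of a given length at a constant decode rate."""
    return output_tokens / tokens_per_second


if __name__ == "__main__":
    sample = StreamTiming(request_sent=0.0, first_token=0.25, last_token=1.25, output_tokens=1201)
    print("TTFT (s):", time_to_first_token(sample))
    print("decode tok/s:", decode_tokens_per_second(sample))
    print("600 tokens at 1,200 tok/s (s):", generation_seconds(600, 1200))
```

Reporting TTFT separately from decode throughput matters because a chip can stream tokens quickly yet still feel slow if prefill or queueing delays the first token.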