Nemotron 3 Nano 4B: NVIDIA Edge Model Runs on 8GB

NVIDIA's Nemotron 3 Nano 4B packs a Mamba-dominant hybrid architecture, a 262K-token context, and 95.4% on MATH500 into a model that fits an 8GB Jetson Orin Nano.

Meta published a four-generation MTIA silicon roadmap delivering chips every six months through 2027, with compute scaling 25x from MTIA 300 to MTIA 500.

Apple's cheapest Mac ever packs the A18 Pro iPhone chip with a 16-core Neural Engine, but its 60 GB/s memory bandwidth puts a hard ceiling on which local models you can actually run.

NVIDIA will unveil a new inference processor built on Groq's LPU architecture at GTC 2026, with OpenAI as its first major customer allocating 3 GW of dedicated capacity.

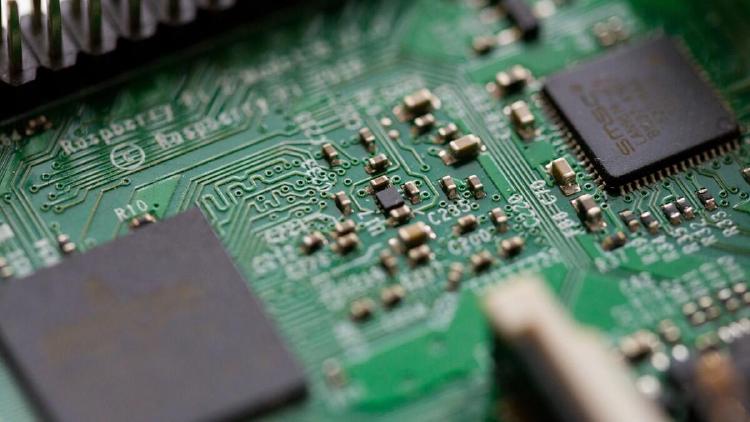

Awesome Agents launches a dedicated Hardware section with detailed spec pages for 21 GPUs, TPUs, and AI accelerators - from datacenter flagships to home lab favorites.

Two very different approaches to desktop AI hardware: a 32 GB eGPU with 1,792 GB/s bandwidth versus a 128 GB unified memory mini PC with full CUDA. Which one should you buy?