AWS Trainium3 - Amazon's 3nm AI Accelerator

Complete specs, benchmarks, and analysis of AWS Trainium3 - Amazon's TSMC 3nm AI chip with 2.52 PFLOPS FP8, 144GB HBM3e, and NeuronLink-v4, powering Anthropic's Claude through Project Rainier.

Full specs and critical analysis of the Etched Sohu - a transformer-specific ASIC claiming 500K+ tokens/sec on Llama 70B, built on TSMC 4nm with 144GB HBM3e. Bold claims, but no independent benchmarks yet.

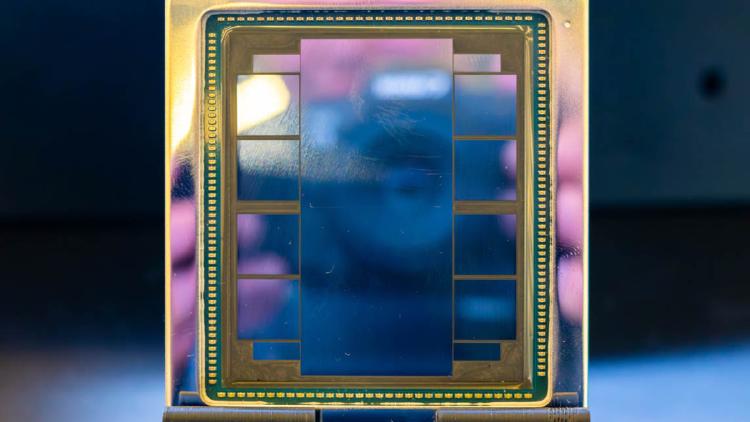

AMD Instinct MI350X specs and performance estimates: 288GB HBM3e, ~6,000 GB/s bandwidth, and ~3,600 TFLOPS FP8 on the CDNA 4 architecture, built on TSMC 3nm.

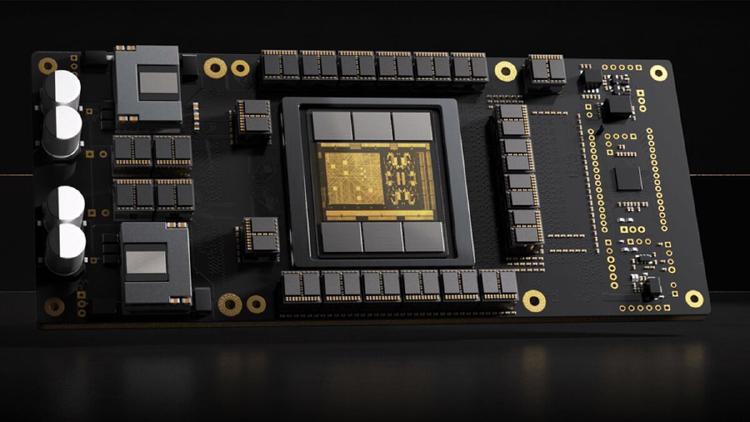

Complete specs, benchmarks, and analysis of the NVIDIA B200 - the Blackwell-architecture flagship GPU with 192GB HBM3e, 8 TB/s bandwidth, and up to 9,000 TFLOPS FP8.

Complete specs, benchmarks, and analysis of the NVIDIA GB200 NVL72 - the 72-GPU rack-scale Blackwell system delivering 1,440 PFLOPS FP4 for trillion-parameter AI training and inference.

Complete specs, benchmarks, and analysis of the NVIDIA GB300 NVL72 - the Blackwell Ultra rack-scale system with 288GB HBM3e per GPU, 1.5x more FP4 compute, and 2x attention performance over GB200.