Nemotron-Cascade 2: 30B Open MoE, One GPU, Beats 120B

NVIDIA's new Nemotron-Cascade-2-30B-A3B activates just 3B parameters per token, runs on a single RTX 4090, and outscores NVIDIA's own 120B model on coding and math benchmarks.

NVIDIA's Nemotron 3 Nano 4B packs a Mamba-dominant hybrid architecture, 262K token context, and 95.4% on MATH500 into a model that fits an 8GB Jetson Orin Nano.

Microsoft Azure's Foundry platform now runs Fireworks AI's inference engine, bringing DeepSeek V3.2, Kimi K2.5, and MiniMax M2.5 into enterprise AI under a unified control plane.

NVIDIA opens GTC 2026 with the Vera Rubin platform - six co-designed chips delivering 50 PFLOPS of inference per GPU and 10x lower token cost than Blackwell.

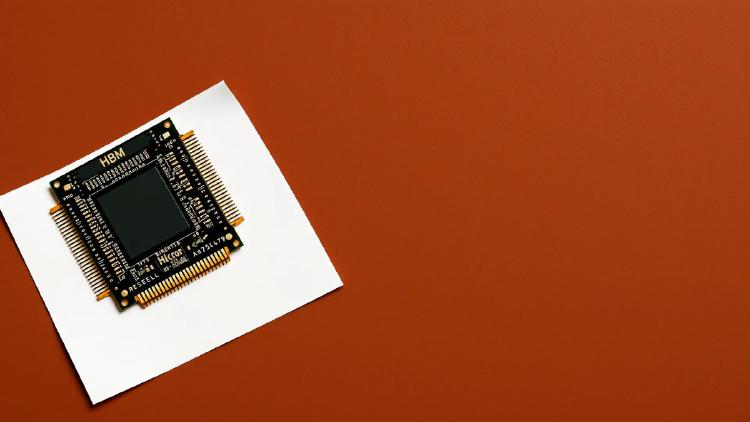

Meta's first mass-deployed RISC-V AI accelerator - 1.2 PFLOPS FP8, 216 GB HBM, powering Facebook and Instagram at scale.

Rankings of the fastest AI models and inference providers by tokens per second, time to first token, and end-to-end latency.