Cerebras WSE-3 - The Wafer-Scale AI Engine

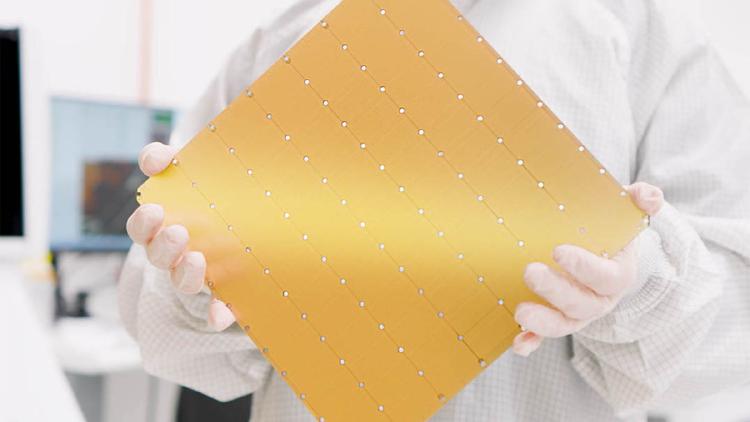

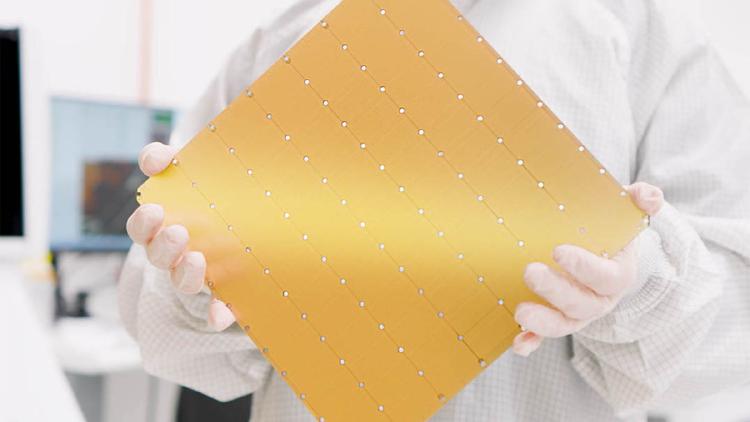

The Cerebras Wafer-Scale Engine 3 (WSE-3) is the largest chip ever built: a single processor made from one TSMC 5nm wafer, with 900,000 AI cores, 44GB of on-chip SRAM, and 21 PB/s of memory bandwidth. It powers the CS-3 AI supercomputer.
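
To put those headline numbers in per-core terms, here is a rough back-of-the-envelope sketch. It assumes decimal units (1 GB = 10^9 bytes, 1 PB = 10^15 bytes) and an even split of SRAM and bandwidth across all 900,000 cores. Neither assumption is stated in the figures above, and the helper name `per_core_share` is purely illustrative.

```python
# Headline WSE-3 figures quoted above (decimal units are an assumption).
CORES = 900_000
SRAM_BYTES = 44e9        # 44 GB of on-chip SRAM
BANDWIDTH_BPS = 21e15    # 21 PB/s of memory bandwidth


def per_core_share(total: float, cores: int = CORES) -> float:
    """Evenly divide a chip-wide total across all cores (assumed uniform split)."""
    return total / cores


sram_per_core_kb = per_core_share(SRAM_BYTES) / 1e3
bw_per_core_gbs = per_core_share(BANDWIDTH_BPS) / 1e9

print(f"SRAM per core:      ~{sram_per_core_kb:.1f} KB")
print(f"Bandwidth per core: ~{bw_per_core_gbs:.1f} GB/s")
```

Under these assumptions, each core gets roughly 48.9 KB of local SRAM and about 23.3 GB/s of memory bandwidth. These are averages derived from the totals, not measured per-core specifications.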

Google TPU v7 Ironwood specs, architecture, and performance estimates. Ironwood is Google's inference-optimized TPU with 192GB of HBM per chip, announced at Cloud Next 2025.

Groq's Language Processing Unit (LPU) is a purpose-built inference ASIC that trades HBM for 230MB of on-chip SRAM, delivering deterministic latency and record-breaking tokens-per-second for LLM serving.

Intel Gaudi 3 is a TSMC 5nm AI accelerator with 128GB HBM2e and 1,835 TFLOPS FP8 performance, positioned as a cost-effective alternative to NVIDIA H100 for training and inference workloads.

Complete specs, benchmarks, and analysis of the NVIDIA A100 80GB SXM - the Ampere-architecture GPU that remains the most widely deployed AI accelerator in the world.

Complete specs, benchmarks, and analysis of the NVIDIA B200 - the Blackwell-architecture flagship GPU with 192GB HBM3e, 8 TB/s bandwidth, and up to 9,000 TFLOPS FP8.