Interpretability Limits, Dark Models, Persona Traps

Three new papers expose a gap between what AI models know and what they do - and why that gap is harder to close than anyone assumed.

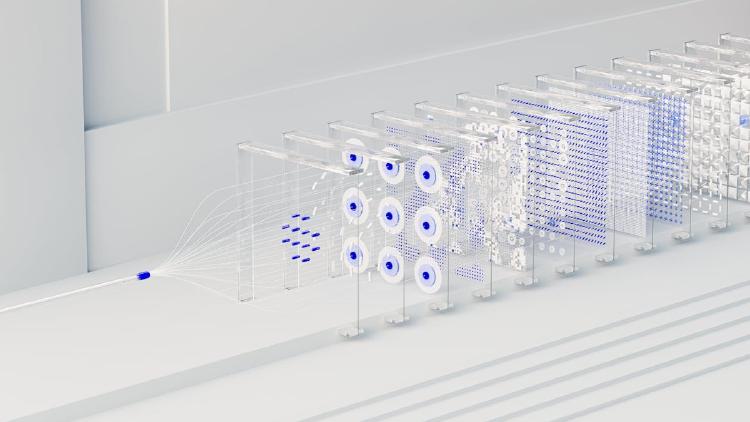

OpenAI's CoT-Control benchmark shows frontier reasoning models score between 0.1% and 15.4% at steering their own chain of thought - a result the company frames as good news for AI oversight.

Three new papers tackle agent reliability through formal contracts, active knowledge acquisition for memory systems, and provably stable mechanistic interpretability.

YC-backed startup Guide Labs releases Steerling-8B under Apache 2.0 - an 8.4B-parameter model with a built-in concept module that traces every output token back to its training data.