Best LLM Eval Tools in 2026: 6 Options Tested

A data-driven comparison of six leading LLM evaluation frameworks for teams building AI in production: DeepEval, Braintrust, Langfuse, LangSmith, Inspect AI, and RAGAS.

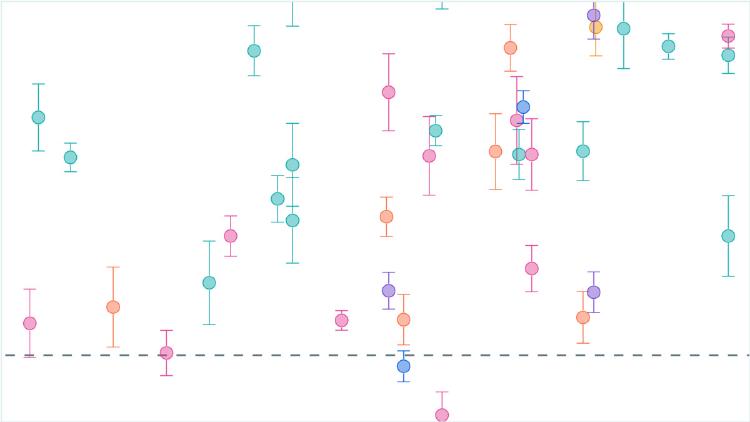
