MiniMax M2.7 Claims to Automate Its Own Training

MiniMax's new 2,300B MoE model tops the Artificial Analysis Intelligence Index and claims to run 30-50% of its own RL research workflow autonomously.

A 1-trillion-parameter model called Hunter Alpha appeared anonymously on OpenRouter on March 11. Developers say it's DeepSeek V4 in disguise. The signals are strong, but the precedent cuts both ways.

Arc Institute and NVIDIA release Evo 2, a 40B-parameter open-source AI trained on 9.3 trillion nucleotides from every domain of life, with full weights, code, and training data.

Inception Labs' Mercury 2 hits 1,196 tokens per second in independent testing - a diffusion architecture that rewires how inference works.

DeepSeek will release V4, a natively multimodal trillion-parameter model with a 1M-token context window, in the first week of March - optimized for Huawei Ascend chips, not NVIDIA.

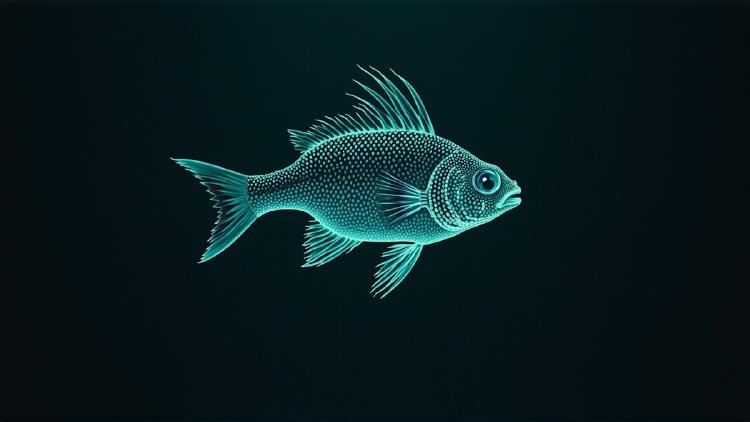

DeepSeek's V4 Lite model has leaked through inference provider testing under strict NDAs, revealing a 1M-token context window, native multimodal capabilities, and the internal codename sealion-lite.