Mistral Small 4

Mistral AI's unified MoE model - 119B total parameters, 6B active per token, 128 experts, 256K context, configurable reasoning, Apache 2.0 license.
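As a rough sense of scale, the sketch below computes the share of the model's weights that a mixture-of-experts design like this touches for each token. It takes the headline 119B and 6B figures as exact round numbers, so the result is approximate. The function name `active_fraction` is a hypothetical helper for illustration. All 119B parameters still have to be stored; only the per-token compute scales with the active count.

```python
# Headline figures quoted for Mistral Small 4; treated here as exact
# round numbers, so the result is only approximate.
TOTAL_PARAMS = 119e9   # all weights, across all 128 experts
ACTIVE_PARAMS = 6e9    # weights actually used for one token


def active_fraction(total: float, active: float) -> float:
    """Return the share of weights an MoE model uses per token (hypothetical helper)."""
    if total <= 0:
        raise ValueError("total must be positive")
    return active / total


frac = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
print(f"{frac:.1%} of parameters active per token")  # prints "5.0% of parameters active per token"
```

In other words, each token is processed by roughly one twentieth of the network.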

Mistral AI releases Small 4, a 119B MoE with only 6B active parameters, a 256K context window, configurable reasoning, and an Apache 2.0 license. It also announced a new partnership with NVIDIA to co-develop frontier open models.

Kraken launched an open-source Rust CLI with a built-in MCP (Model Context Protocol) server that lets AI agents such as Claude Code and Codex trade crypto, manage portfolios, and paper-trade against live markets.

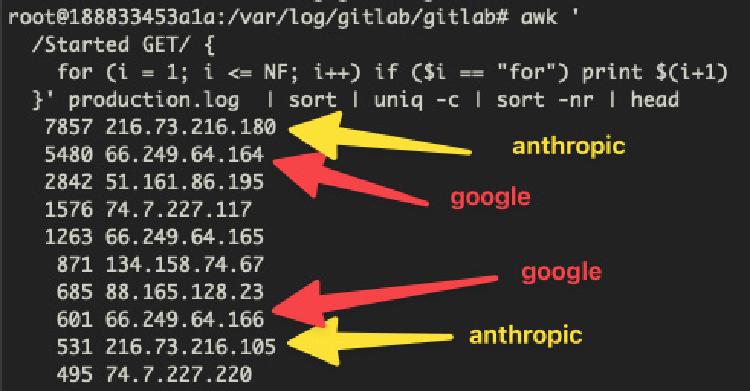

AI training crawlers from Anthropic, Google, and OVHcloud knocked a French national research institute's GitLab server offline for hours, leaving nobody at CNRS's Institute of Complex Systems able to work.

LangChain's open-source Deep Agents framework brings planning, subagents, and persistent context to autonomous agents tackling complex multi-step work.

![FLUX.2 [klein] 4B](https://awesomeagents.ai/images/models/flux-2-klein-4b_hu_dec233f8cc7116ef.jpg)

Black Forest Labs' fastest open-source image generation model: 4B parameters, Apache 2.0 license, and sub-second generation on consumer GPUs with 13 GB of VRAM.