MiniMax M2.7 Claims to Automate Its Own Training

MiniMax's new 2,300B MoE model tops the Artificial Analysis Intelligence Index and claims to run 30-50% of its own RL research workflow autonomously.

Cursor launches Composer 2, its first in-house coding model trained via RL on long-horizon tasks, scoring 73.7 on SWE-bench Multilingual at $0.50/M input tokens.

Three new arXiv papers tackle constitutional AI rule learning, sleeper agent defense for multi-agent pipelines, and skill-evolving reinforcement learning for math reasoning.

New research shows enterprise AI agents top out at a 37.4% success rate, a deterministic safety gate beats commercial solutions, and an ICLR 2026 paper cuts RL compute by 81%.

A Hugging Face survey of 16 open-source reinforcement learning libraries finds the entire ecosystem has converged on async disaggregated training to fix a single brutal bottleneck: GPU idle time during long rollouts.

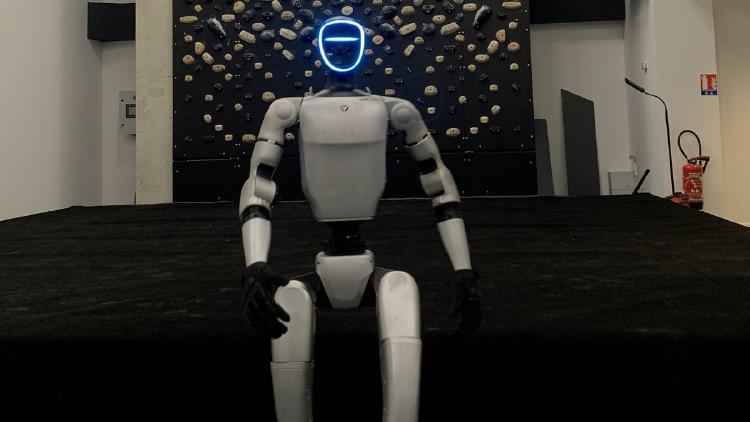

Hugging Face ships its largest LeRobot update yet: Unitree G1 humanoid support, Pi0-FAST VLA, Real-Time Chunking, 10x faster image training, and PEFT/LoRA fine-tuning for large robot policies.