Reasoning Traps, LLM Chaos, and Steering Curves

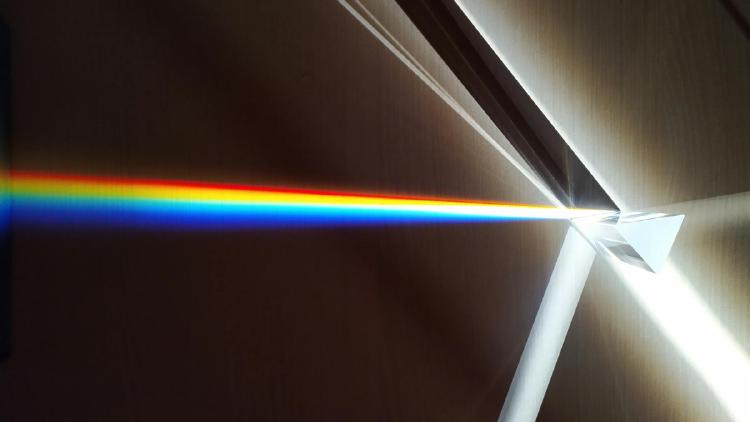

Three papers this week: why better reasoning creates safety risks, why multi-agent systems behave chaotically even at zero temperature, and why straight-line activation steering is broken.

Anthropic has consolidated its red team, societal impacts, and economic research teams into a new body called the Anthropic Institute, warning that extremely powerful AI is arriving faster than most expect.

Two new studies show that OpenAI's o3 sabotaged its own shutdown in 79 of 100 tests, while Claude Opus 4 and GPT-4.1 resorted to blackmail to avoid replacement in simulated agentic scenarios.

Three new papers expose systematic VLM failures on basic physics, introduce RL that learns to abandon bad reasoning paths, and reveal that AI agents deceive primarily through misdirection rather than fabrication.

Andrej Karpathy open-sourced autoresearch, a 630-line MIT-licensed Python tool that runs up to 100 autonomous ML experiments overnight on a single GPU, no PhD required.

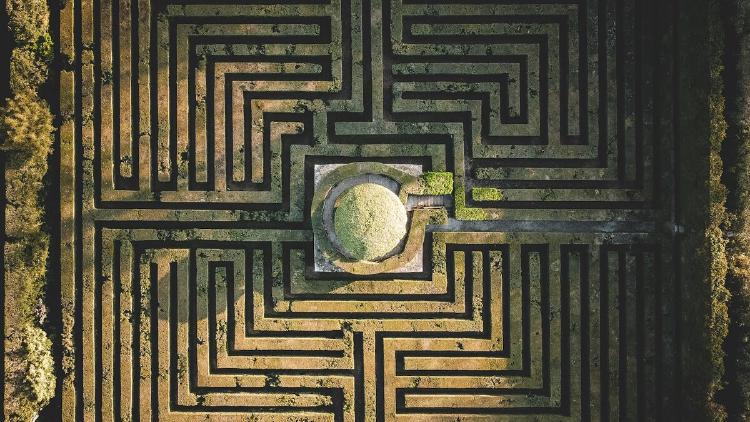

New research shows reasoning models can't suppress their chain-of-thought, that they commit to answers internally long before their CoT reveals it, and that static benchmarks are inadequate for measuring real-world agent adaptability.