Percepta Builds a Computer Inside a Transformer

Percepta AI compiled a WebAssembly interpreter into transformer weights, executing programs deterministically at 33K tokens/sec on CPU, but the community is skeptical of its practical value.

OLMo Hybrid combines transformer attention with Gated DeltaNet to match OLMo 3 accuracy using 49% fewer tokens, with 75% better throughput on long contexts. Fully open: weights, checkpoints, training code, and technical report.

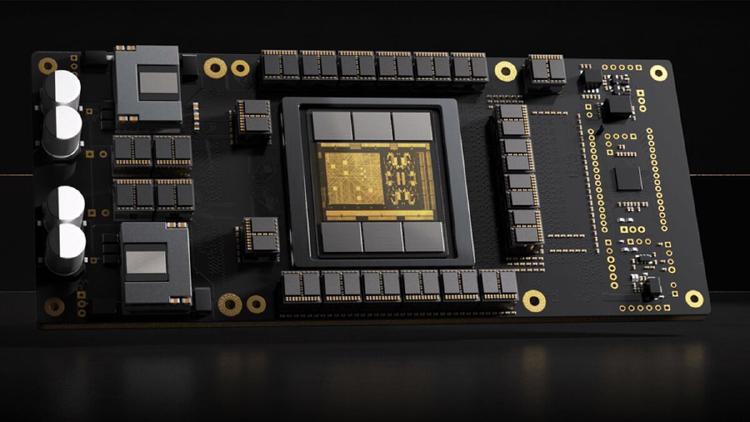

Full specs and critical analysis of the Etched Sohu - a transformer-specific ASIC claiming 500K+ tokens/sec on Llama 70B, built on TSMC 4nm with 144GB HBM3E. Bold claims, but no independent benchmarks yet.